in-memory analytics

What is in-memory analytics?

In-memory analytics is an approach to querying data that resides in a computer's random access memory (RAM) as opposed to querying data that is stored on physical drives. Doing this results in vastly shortened query response times, allowing business intelligence (BI) and analytic applications to support faster business decisions.

In conventional data processing, data is stored on a hard disk and loaded into RAM when required. It is then processed by the CPU, which generates the required outputs. The problem with this method, which has been in use for years, is that it can be slow. The time needed to fetch data from the hard disk and make it available to the CPU can become a bottleneck for modern-day analytics applications that require low latency and fast data access.

In-memory analytics means making data available in the memory for analytics instead of having to access it repeatedly from where it is stored on a storage system comprising conventional hard disks or solid-state drives. This approach takes advantage of in-memory processing power to reduce latency as well as enable fast data processing and speedier results.
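The idea of loading data once and then querying it from memory can be sketched with Python's standard `sqlite3` module, which can create a database that lives entirely in RAM when given the special name `:memory:`. This is only a minimal illustration, not how any particular BI product works; the `sales` table, its columns and the sample rows are hypothetical.

```python
import sqlite3

# Hypothetical sample data; a real workload would load this once from storage.
SALES = [
    ("north", "widget", 120.0),
    ("north", "gadget", 80.0),
    ("south", "widget", 200.0),
    ("south", "gadget", 50.0),
]


def load_into_memory(rows):
    """Create a RAM-resident SQLite database and load the rows once."""
    conn = sqlite3.connect(":memory:")  # the database exists only in RAM
    conn.execute("CREATE TABLE sales (region TEXT, product TEXT, amount REAL)")
    conn.executemany("INSERT INTO sales VALUES (?, ?, ?)", rows)
    conn.commit()
    return conn


def revenue_by_region(conn):
    """Run an analytic query against the in-memory table."""
    cursor = conn.execute(
        "SELECT region, SUM(amount) FROM sales GROUP BY region ORDER BY region"
    )
    return cursor.fetchall()


conn = load_into_memory(SALES)
result = revenue_by_region(conn)
```

Once the rows are loaded, `revenue_by_region` can be called repeatedly without going back to the storage system, which is the access pattern in-memory analytics is built around.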

In-memory analytics leverages two technological approaches to reduce latency and speed up data access and processing:

- Columnar data storage. In a columnar database, the values of each column are stored together in a contiguous, one-dimensional sequence rather than record by record in a two-dimensional (rows and columns) format. A query that aggregates a few columns therefore reads only those columns instead of scanning entire rows.

- Massively parallel processing. Multiple processors working in a coordinated fashion let databases handle large amounts of data and produce analytics results faster.
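Both approaches can be shown in miniature. The sketch below pivots a few hypothetical order records into per-column lists, then sums one column by splitting it into partitions, summing each partition in a worker and combining the partial results. The names `to_columns` and `partitioned_sum` are made up for this example, and the thread pool only illustrates the split-and-combine pattern; a real massively parallel system spreads the work across many processors or machines.

```python
from concurrent.futures import ThreadPoolExecutor

# Row-oriented layout: each record keeps all of its fields together.
rows = [
    {"order_id": 1, "region": "north", "amount": 120.0},
    {"order_id": 2, "region": "south", "amount": 200.0},
    {"order_id": 3, "region": "north", "amount": 80.0},
    {"order_id": 4, "region": "south", "amount": 50.0},
]


def to_columns(records):
    """Pivot row records into one contiguous list per column."""
    return {name: [r[name] for r in records] for name in records[0]}


def partitioned_sum(values, partitions=2):
    """Split a column into chunks, sum each chunk in a worker, then combine."""
    size = max(1, -(-len(values) // partitions))  # ceiling division
    chunks = [values[i:i + size] for i in range(0, len(values), size)]
    with ThreadPoolExecutor(max_workers=partitions) as pool:
        partial_sums = list(pool.map(sum, chunks))
    return sum(partial_sums)


columns = to_columns(rows)
# Only the "amount" column is touched; order_id and region are never read.
total = partitioned_sum(columns["amount"])
```

The aggregate reads a single list instead of walking every field of every record, and the partitioning step is where additional processors would be put to work.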

Why is in-memory analytics important?

Today, organizations in every industry rely on vast quantities of data to inform their operations, strategies and decisions. In addition to high volumes, they also have to contend with two other aspects of data:

- Variety. Different types of data are generated in both structured and unstructured formats.

- Velocity. Data is generated at high speeds.

All this big data must be properly stored, processed, analyzed and distributed to help organizations capture the insights that transform raw data into useful information.

But to do so, the data must be easily available for analysis. Users should be able to access the data they need and capture insights from it to guide their actions and decisions. Conventional storage media such as hard disks or even solid-state drives don't necessarily support these needs because their access times are much longer than those of RAM. Modern-day big data requires fast access and analysis.

Benefits of in-memory analytics

In-memory analytics supports faster data-driven decision-making and problem solving because the data used by applications is held in main memory rather than on storage media. When data remains available in RAM as an in-memory database, users and analytics applications can access it faster. Moreover, multiple users can perform interactive data processing for analytics purposes without being held back by the speed constraints of conventional storage.

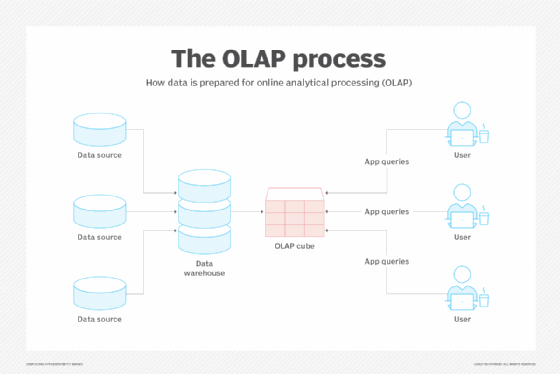

In addition to providing fast query response times, in-memory analytics can also reduce or eliminate the need for data indexing and storing pre-aggregated data in OLAP cubes or aggregate tables. This reduces IT costs and allows faster implementation of BI and analytic applications. As BI and analytic applications embrace in-memory analytics, it's likely that traditional data warehouses might be used only for data that is not queried frequently.
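To see why pre-aggregated tables become less necessary, consider a minimal sketch in which the detail records already sit in memory and totals are computed on demand along whatever dimension the user asks for. The `FACTS` records and the `aggregate` helper are hypothetical and stand in for a much larger fact table.

```python
from collections import defaultdict

# Hypothetical detail-level records held in memory.
FACTS = [
    {"region": "north", "product": "widget", "amount": 120.0},
    {"region": "north", "product": "gadget", "amount": 80.0},
    {"region": "south", "product": "widget", "amount": 200.0},
    {"region": "south", "product": "gadget", "amount": 50.0},
]


def aggregate(facts, dimension, measure="amount"):
    """Total a measure along any dimension at query time."""
    totals = defaultdict(float)
    for fact in facts:
        totals[fact[dimension]] += fact[measure]
    return dict(totals)


# No aggregate table is built ahead of time for either view.
by_region = aggregate(FACTS, "region")
by_product = aggregate(FACTS, "product")
```

Because each view is derived from the same in-memory records when it is requested, a new grouping needs no new cube or aggregate table, only another call.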

Some other benefits of in-memory analytics include the following:

- Data from multiple sources can be integrated to provide a comprehensive view and single source of truth to all authorized users.

- A data-driven culture is encouraged where actions and decisions are based on solid data rather than unclear assumptions or incomplete information.

- Real-time analytics help organizations make better and more timely decisions.

The rising popularity of in-memory analytics

As RAM costs decline, in-memory analytics is becoming feasible for many businesses. Falling dynamic RAM costs are a particularly welcome development for organizations that work with large volumes of data.

BI and analytic applications have long supported caching data in RAM, but older 32-bit operating systems provided only 4 gigabytes of addressable memory. Newer 64-bit operating systems remove that ceiling. Their practical limits depend on the hardware and the operating system edition but can reach 1 terabyte or more, which has made it possible to cache large volumes of data -- potentially an entire data warehouse or data mart -- in a computer's RAM.
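The 4-gigabyte figure follows directly from the width of a memory address, as the short calculation below shows. It gives theoretical ceilings only; the memory a given 64-bit system can actually use is lower and depends on the processor, motherboard and operating system. The helper name `addressable_bytes` is made up for this example.

```python
GIB = 2 ** 30  # bytes in one gibibyte


def addressable_bytes(address_bits):
    """Number of distinct byte addresses for a given address width."""
    return 2 ** address_bits


limit_32_gib = addressable_bytes(32) // GIB  # 4
limit_64_gib = addressable_bytes(64) // GIB  # 2**34, far beyond today's RAM sizes
```

Each additional address bit doubles the addressable range, so moving from 32 to 64 bits multiplies the theoretical limit by 2 to the power of 32.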

A data warehouse helps organizations support their BI and analytics activities. With in-memory analytics, they can explore the relationships between data in the warehouse as well as create and refine their analytical models for a wide range of applications.

In the coming years, more companies are likely to use in-memory analytics to process vast and growing quantities of data quickly and to generate valuable insights. Others will apply in-memory predictive analytics to business functions such as marketing, sales, finance, cybersecurity and operations.
