processing in memory (PIM)

What is processing in memory?

Processing in memory, or PIM (sometimes called processor in memory), refers to the integration of a processor with Random Access Memory (RAM) on a single chip. The result is sometimes known as a PIM chip.

PIM allows computations and processing to be performed within the memory of a computer, server or similar device. The arrangement speeds up the overall processing of tasks by performing them within the memory module.

How processing in memory works

Standard computer architectures suffer from a latency problem. This latency is also known as the von Neumann bottleneck and is the result of all processing being done by the processor alone. The computer's memory is not involved in processing, as it is used only to store programs and data. PIM is one approach to overcome this inherent latency issue.

In a computing system or application, when the volume of data is large, moving it between memory and processors can slow down processing. Adding processing directly into memory can help alleviate this problem. PIM can manipulate data entirely in the computer's memory. The application software running on one or more computers manages the processing and the data in memory.

If multiple computers are involved, the software divides the processing into smaller tasks. These tasks are then distributed to each computer and run in parallel to reduce latency and speed up processing.

Difference between processing in memory and database processing

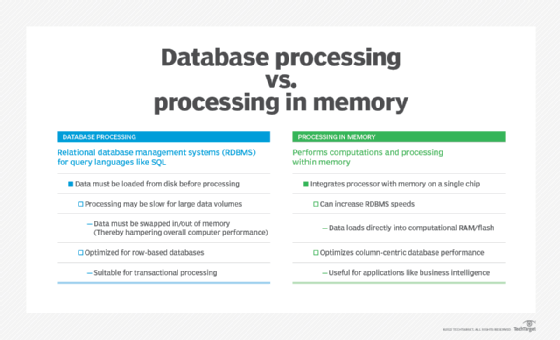

Database processing refers to relational database management systems (RDBMS) structured to allow the use of query languages like SQL. With RDBMS processing, the data is first loaded from the disk so that it can be processed.

If data volumes are large, processing may slow down as data has to be swapped in and out of memory, which could also hamper overall computing performance. Processing in memory can increase RDBMS processing speeds by loading data directly onto the RAM or flash memory that also has computational capabilities.

Another difference between the two methods concerns the use of databases. Database processing is optimized for row-based databases, while PIM depends on column-centric databases. Row-based storage is suitable for transactional processing, but not for applications like business intelligence (BI), where column-based databases are more suitable. This is why PIM is so useful for such applications.

Advantages of processing in memory

Some of the most important advantages of processing in memory include the following:

- Faster data processing. In comparison to standard drive-based processing, working on data directly stored in RAM or flash memory removes bottlenecks and increases overall processing speed. It also increases data transfer speeds between PIM chips and storage.

- PIM applications. Since it offers high-speed processing, PIM is often described as Real Time, which makes it particularly beneficial for real-time or near real-time applications and use cases such as the following:

- computer vision (e.g., in self-driving cars);

- streaming video;

- artificial intelligence; and

- machine learning and deep learning.

Other applications that need high-speed processing are also suitable candidates for PIM, such as the following:

- predictive maintenance

- payment processing

- fraud detection

- algorithmic trading

In addition, applications where high-speed processing is not necessary but desirable can also benefit from PIM. Examples of such applications include the following:

- BI dashboards

- ad hoc data querying

- Extract-Transform-Load (ETL) workloads

Challenges in achieving high-speed processing in memory

Despite its benefits, there are also some drawbacks related to PIM, particularly related to design and manufacturing.

- Design challenges. PIM requires adopting new approaches to chip It also requires sophisticated parallel design to accommodate a near-memory queue, as well as a deeper understanding of how the various types of memory can be used to design a PIM system.

- Manufacturing challenges. From the manufacturing standpoint, it is crucial to reduce the physical distance that signals must travel between memory and logic, which may increase costs or create thermal issues.

- Reliance on memory. Another drawback concerns the very feature that makes PIM memorable: its reliance on memory. If the computing system, particularly its RAM or flash memory is damaged, it can lead to data compromise.

- Cost. Memory-based systems are more expensive than traditional computing architectures. The cost can be prohibitive for smaller companies and low-profile applications, but may not be an issue for larger organizations with massive data warehouses or big application budgets.

See also how the computational storage drive is changing computing and Keys to making computational storage work in your applications. Explore Using computational storage to handle big data and Computational storage: Go beyond computation offloading.